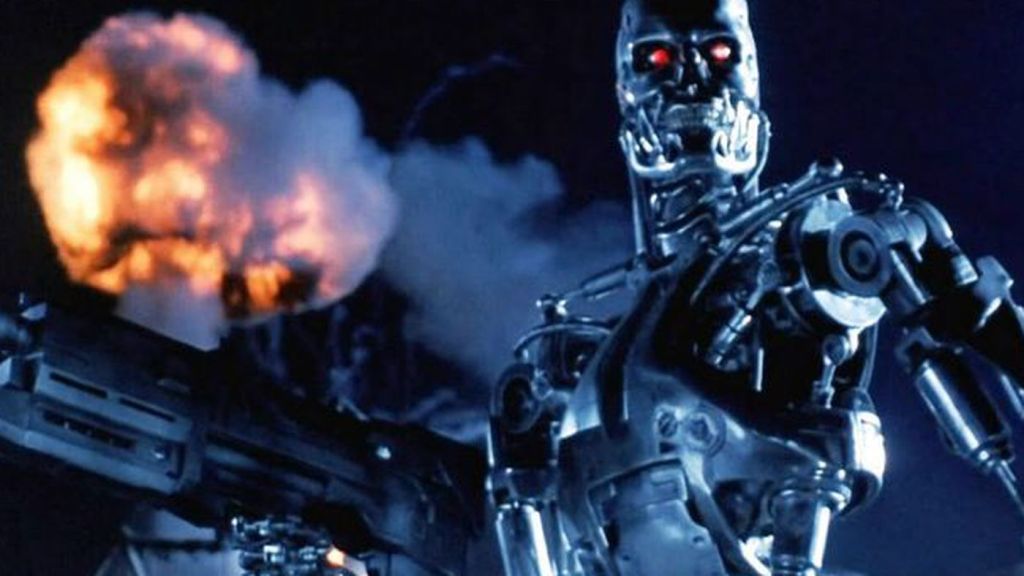

Thirty years ago, one of the greatest sequels of all time was released when James Cameron’s Terminator 2: Judgment Day, often shortened to T2, appeared in theaters. Coming off the success of the first Terminator film as well as another classic sequel, Aliens, Cameron reintroduced audiences to his nightmarish future world, where the planet has been taken over by Skynet, a rogue supercomputer attempting to wipe out the remnants of humanity. As with the first film, Terminator 2: Judgment Day opens with human resistance forces, led by John Connor, in a pitched battle that pits a ragtag group of human fighters against colossal hunter-killer machines. This sequence is certainly one of the highlights of the film, and later Terminator films have not really matched it.

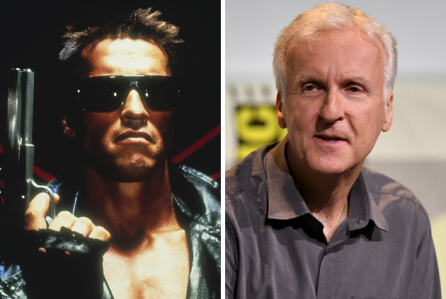

The film then moves to the present, where the terminator sent back in time (played by Arnold Schwarzenegger at the height of his career) arrives, this time to protect John Connor as a ten-year-old. Changing Schwarzenegger from the villain of the first film to the hero was risky, but it pays off: he and John Connor (Edward Furlong) have a great rapport, with the emotionless killer cyborg learning what it means to be human from the sarcastic but strong child. John’s strength obviously comes from his mother, Sarah Connor, played brilliantly by Linda Hamilton.

Her character has also changed dramatically since the first film, where she was an innocent bystander; she is now a warrior willing to do anything to protect her child, knowing he is the savior of humanity. Her reunion with her son and with the machine of her nightmares is a highlight, as is their first encounter, in a mental health institution, with the iconic T-1000 (Robert Patrick), a liquid metal killer cyborg that ruthlessly hunts John. The nearly silent and deadly T-1000 is an interesting contrast to Schwarzenegger’s hulking T-800 model. Our heroes’ journey takes them south of the border and finally back to where it all started: Cyberdyne Systems, the place where Skynet itself was created, as they attempt to stop the nuclear war and the rise of the machines from ever taking place. The final battle with the T-1000 at a steel mill is another thrilling highlight in a movie filled with show-stopping scenes, as the T-800, having learned about humanity from John, makes the ultimate sacrifice for the benefit of all humankind.

The theme of what it means to be human permeates this film and raises it above the level of just another cool action movie. It runs from Sarah confronting her nightmares of the future and almost losing her humanity when she tries to commit murder to change that future, to John seeing his machine protector as a father figure, to the terminator itself telling John at the end that he knows why humans cry, even if he could never do it himself. T2 has much to say about the future of humankind and how our fates are not set in stone. That idea directly drives events in the film when the T-800, John and Sarah attempt to destroy Skynet with the help of its creator, Miles Dyson (Joe Morton), who realizes that his future creation will cause a nuclear holocaust and threaten humanity with extinction.

Having said all that, the film also has a well-deserved reputation as a fantastic and influential action movie, with incredibly exciting stunts and special effects that revolutionized the genre. The “morphing” effect that brought the shape-shifting T-1000 to life forever changed our sense of what was possible in science fiction and in film generally. It led directly to the stunning dinosaur effects in Jurassic Park two years later, as well as to other films demonstrating that new worlds and creatures could be realized on screen. The film also established Terminator as a franchise, which in retrospect has had mixed results. The direct follow-up, Terminator 3: Rise of the Machines, took the story to an interesting place and had a great ending, and the next film, Terminator Salvation, finally showcased the future war hinted at in the earlier films. But the most recent films, Terminator Genisys and Terminator: Dark Fate, were both reboots that were lacking, to put it mildly.

However, the franchise is still intact, with a new anime series in development at Netflix and a recently released video game, Terminator: Resistance, that is an excellent foray into the future war. The game leads right up to the opening sequence of T2, which is revealed to be the final battle between Skynet and John Connor’s forces before the terminators are sent back in time. All of these sequels, as well as the great and still-missed TV show Terminator: The Sarah Connor Chronicles and the Universal Studios theme park attraction T2-3D: Battle Across Time, used Terminator 2: Judgment Day as the springboard to new plotlines. That is because T2 did such a great job of showcasing its world of killer cyborgs and brave yet flawed heroes fighting against a seemingly inevitable fate of death and destruction.

Whatever the future has in store for the Terminator franchise, it is certain that the influence and impact of Terminator 2: Judgment Day will always be felt, both for its epic scope and excitement and for its insights into what makes us tick. Those qualities, along with its equally great predecessor, will keep this film going for another 30 years and beyond and keep it enshrined as not only a brilliant sequel but a superior film in its own right.

C.S. Link